In 2018, I wrote an editorial about how deep fakes should be a major concern for law enforcement. This week two things reminded me of that article. A Maine police agency is being criticized for posting to its Facebook page an evidentiary image that had been edited with ChatGPT. And on June 29, 2025, the New York Times ran an article asking readers if they could identify the AI-produced videos. Even though we were told in advance that some of the 10 videos were AI generated, none of my friends has scored higher than 70% at identifying the deep fakes. What I wrote about in 2018 is now here. Every law enforcement supervisor and commander needs to understand the implications of this technology.

Three months ago Google demonstrated a stunning new artificial intelligence capability for its Google Assistant smart speaker. The technology, branded Duplex, can autonomously call a hair salon and schedule an appointment, or call a restaurant and make a reservation, and perform other similar tasks, all with a very human-sounding voice. Duplex even reacts with no discernible lag to what the person on the other end of the phone says, and it says "uh" and "um" like a typical American. If it were programmed to do so, Duplex could call your 911 dispatch center right now and odds are no one would know they were talking to a machine.

But Duplex calling 911 is the least of your worries as more and more AI products reach the public. What you really have to worry about is fake video of you doing things you didn't do and saying things you didn't say. You will also have to worry about fake video implicating the wrong person in a crime or showing a guilty man lounging on the beach 500 miles away rather than robbing your local bank. We are about to enter an era where all video and audio evidence may be more suspect than it's ever been.

Fortunately, the body camera and in-car video companies long ago incorporated technology that prevents anyone from altering a video. Unfortunately, a percentage of the public inclined toward taking anti-police propaganda as gospel already believes that police departments doctor videos to get the evidence they need to exonerate officers after a controversial shooting. So imagine what will happen if, in the very near future, every controversial police shooting is posted on the Web as multiple videos showing totally different actions.

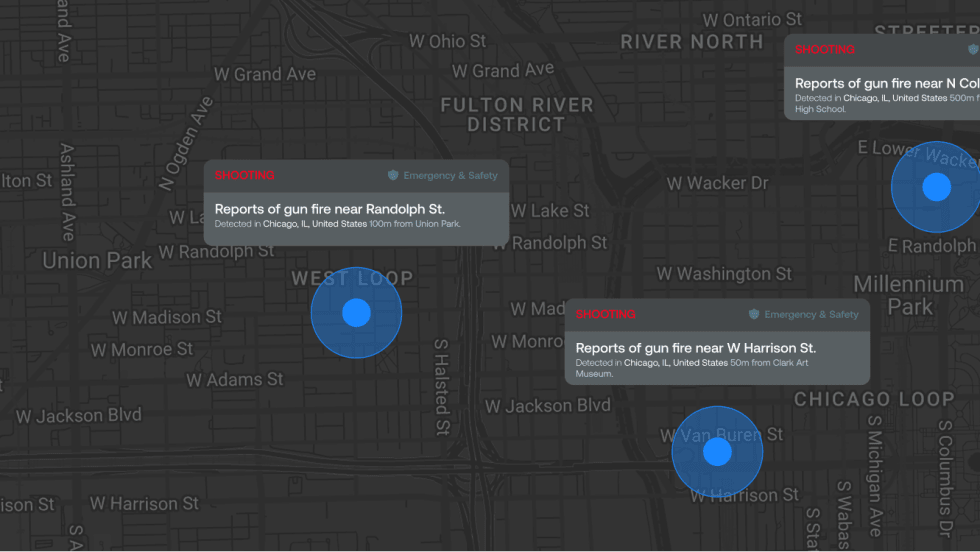

For example, last month in Chicago, a police officer shot a barber after seeing the man allegedly reach toward a concealed firearm at his waistband. Officers had originally stopped the man because a visible bulge at his waist led them to suspect he was carrying. He reportedly resisted and pulled away from the officers, and then the sequence of events that led to his fatal shooting occurred. The shooting led to some unrest, including some "violent protesting," and some officers were injured by thrown objects. As of this writing, the city is still tense. But imagine how tense it would be if the police video showed the man was armed, which he was, and an equally convincing video showed he was unarmed.

Seamless video manipulation using AI algorithms is about to become a major challenge in many fields, including law enforcement. Crude apps are already available that can do some pretty remarkable video manipulation. For example, people have manipulated porn videos by replacing the faces and voices of the performers with those of mainstream actresses. They've also created a bogus video of Barack Obama spewing obscenities about his successor. These videos are called "deep fakes."

It's pretty easy to determine that the current generation of deep fakes has been manipulated. But the thing about AI-based software is that, by its very definition, it learns; it gets better with use. So those telltale blurs and other artifacts that now reveal these videos are phony are going to go away. And it's estimated that very soon even top experts in the field of video editing will have trouble determining what is real and what is fake.

The good news is your evidentiary videos will probably stand up in a court of law. The bad news is they will be in doubt, even more so than they are now, in the court of public opinion.

What we are about to see is an arms race between creators of propaganda deep fakes, including sophisticated foreign intelligence agencies and unsophisticated trolls, and producers of tools for proving the videos are not genuine. And for the first few years of the coming AI revolution, the deep fake creators are going to have a head start.

The only advice I can give you to lessen the pain of this coming nightmare is to make sure your video system is running when it's supposed to. Because your best hope for combating deep fakes and the damage they will do to your reputation, your department, and your profession is to make sure you have an official video. If you don't, I assure you the deep fake purveyors will make one for you, and you won't like what it shows.