Photo: Getty Metamorworks

A few weeks after the in-custody death of George Floyd in Minneapolis, one of the world’s largest companies, Amazon, surrendered its autonomy to anti-police activists and privacy radicals over an investigative tool that some officers were using to solve major felonies. Amazon’s market value in June 2020 was just under a trillion dollars. Yet the company fled the law enforcement facial recognition business, prohibiting law enforcement agencies from using its Rekognition solution.

Amazon’s experience two years ago shows how controversial facial recognition technology can be. After 9/11, Homeland Security was supposed to deploy the technology for airport surveillance. But limits on the accuracy of the software and privacy concerns voiced by politicians and activists made such plans less than viable. Today, American law enforcement generally confines facial recognition to after-the-fact investigations.

Still, any discussion of facial recognition use by law enforcement brings wails of protest from civil rights and anti-police activists. They argue that facial recognition is biased against people of color and therefore shouldn’t be used by investigators, even when they are trying to solve a series of child murders.

The activists’ arguments against facial recognition led the Massachusetts legislature to propose a bill that would require any law enforcement agency to obtain a warrant before running facial recognition on DMV photos. In May 2021, the warrant requirement was reduced to a court order requirement. But that legislation could be modified again based on the work of the state’s Facial Recognition Commission, which is recommending that facial recognition searches be conducted only by the State Police.

Opponents of the technology seem to think that agencies can use a facial recognition match as grounds for arrest. Retired NYPD detective Roger Rodriguez spearheaded the development of the department’s facial identification investigation unit and has been working with facial recognition technology for more than a decade. He says matching a face is just a way to develop a lead for further investigation by other means.

Opponents of facial recognition use in police operations also rarely have a strong understanding of the systems’ capabilities. On issues such as what they claim are too many false positives on people of color, they either ignorantly or willfully fail to allow for the possibility that the systems’ performance will improve, which is exactly what facial recognition tools are doing thanks to artificial intelligence technology.

Google for Faces

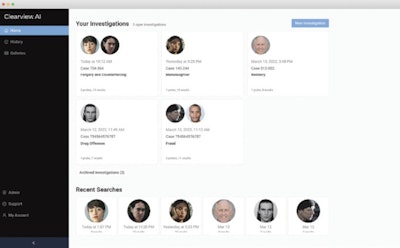

Clearview AI collects facial images from public websites, including social media sites such as Facebook, Twitter, and Instagram. Because it doesn’t rely on government databases such as driver license photos, it is particularly adept at identifying crime victims who are too young to drive or are new to the United States. Photo: Clearview AI

Artificial intelligence is actually in the name of America’s most controversial facial recognition provider, Clearview AI. The company’s eponymous facial recognition product gathers photos of people from public sources on the web, including social media sites like Facebook, Twitter, and Instagram. Rodriguez, who is now Clearview AI’s vice president of sales, says the software is a search engine like “Google for faces.” While Google collects and catalogs the content of web pages, Clearview AI does the same with facial images.

The technology accelerates the search for suspects, saving agencies both time and money. “Searching the Internet for facial matches (the old way) is a very labor-intensive process that requires a team of detectives to go on and find relevant information to connect the dots in their investigation. Clearview AI is a game-changer because it automates that process, yielding targeted results that are relevant to their case. They can then expand upon those leads and utilize them in their investigation,” Rodriguez says.

Early facial recognition systems relied on geometry and dimensions. AI-powered facial recognition systems use the same math, but they also learn more about faces the more faces they see. “Machine learning replicates the human brain’s capacity to remember faces,” Rodriguez says. “This produces more reliable and accurate results.”

The new AI-powered algorithms have also eliminated some of the weaknesses of earlier facial recognition systems. “In the past, the technology could not match a person to a high degree off of a profile image. We are now seeing much better results with those images and that is proving to be very important because criminals do not pose for the camera. If you catch an image of a burglar leaving a premise, you’re going to capture a partial of his face,” Rodriguez says.

Using facial recognition to identify possible suspects from crime scene photos often involves minor photo editing such as increasing contrast, brightening, color correction, sharpening, and deblurring. At many agencies that use facial recognition, investigators perform these edits in consumer image-editing tools like Photoshop. The newest version of Clearview AI’s facial recognition system incorporates a suite of image enhancement tools that can be used to enhance images that would not yield results in their raw state. “A second opportunity to generate an identification can prevent crimes and save lives,” Rodriguez says. Because the enhancement tools are built into Clearview AI, investigators receive reports documenting how each image was improved, which they can use for court documentation. The system also maintains an audit trail of every enhancement.

In vendor testing, Clearview AI’s facial recognition is ranked first in accuracy among U.S.-made systems and second worldwide, surpassed only by a Chinese system, according to Rodriguez. He explains that the company’s large data set is minimizing racial disparities in results. A company spokesperson adds, “What opponents say is the algorithms are least effective in identifying women of African-American descent. What they don’t say is that the difference in accuracy is 99.7 vs. 99.8.”

Facial recognition is much more accurate than traditional law enforcement identification tools, according to Rodriguez, who points out the historic problems with eyewitness identification. “Eyewitnesses are often experiencing undue stress and that can affect accuracy,” he says. “Facial recognition can replace that with a higher level of certainty.” One added benefit of that certainty, according to Rodriguez, is fewer interviews with uninvolved people. “You know exactly who you are looking for and that minimizes unnecessary police interaction [with the wrong suspects].”

Clearview AI offers enterprise model subscriptions. Pricing is based on the size of the agency, with tiers ranging from fewer than 50 sworn officers to more than 5,000. Trials are available but must be approved by command staff. The company also provides training on best practices, legal matters, and even how to address community concerns.

“Old data fuels political opposition,” Rodriguez says. “It’s important to educate the public and law enforcement about the technology and the impact it has on public safety.”

Modular AI

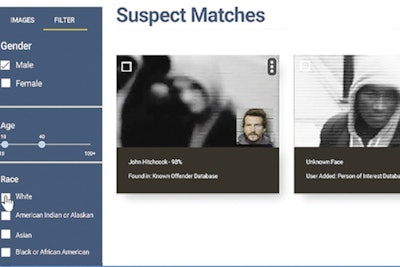

Veritone’s IDentify lets agencies quickly compare their known offenders against crime scene video footage, photos, and even sketches. Photo: Veritone

Another AI-powered facial recognition tool is Veritone’s IDentify, which can identify suspects from known offender databases. Users can limit the search to their own databases or share access with other agencies. And not only can IDentify match faces on video and photos, it can even process sketches.

IDentify is built on Veritone’s aiWare platform, an artificial intelligence operating system that incorporates technologies developed by Veritone as well as technologies Veritone licenses from other developers. Jon Gacek, Veritone’s head of government, legal, and compliance, says the modular nature of its products is beneficial because individual technologies can be swapped out in response to improvements, customer needs, and disruptions.

When IDentify was first launched, Veritone used Amazon’s facial recognition technology Rekognition. The modular nature of the product allowed the company to change to another provider just before Amazon abandoned its law enforcement facial recognition customers. “We swapped it out before Amazon pulled it,” Gacek says. “Other people who were using Amazon felt a little bit burned. They had built workflows and applications around that model and it became obsolete.”

IDentify’s users have also benefited from the fact that the software is not nearly as controversial as some facial recognition solutions. Because IDentify’s searches are limited to known offender databases that the agency has permission to access, it doesn’t raise as many privacy concerns as some other technologies.

Gacek says IDentify is an efficiency tool. It saves investigators from the tedious and laborious process of flipping through images of known offenders, trying to match one of them to an image of an unknown suspect. “Agencies are operating under budget and resource constraints and they are asking how we can automate these processes,” he says.

IDentify is often used in tandem with another Veritone product, Illuminate. IDentify can help an investigator identify an unknown subject. Illuminate can then process massive amounts of data to find every appearance of that person in video. “Illuminate is very good at culling down through a lot of data to find the needle in a haystack,” Gacek says.

Like IDentify, Illuminate is a time-saver. “It can ingest all of the camera footage from a given area and instead of the officers watching it to find the subject, they can search for all of the times the subject appeared,” Gacek says. It can search not just for faces but for clothing or other traits that can be used to differentiate a subject from others in a video.

Because Illuminate can search for subject traits other than faces, it has been very beneficial to users during the COVID-19 pandemic, when many people’s faces have been covered by masks. “It treats humans as objects visually. So it’s not about the facial features; it’s about what the person is wearing, how they walk, what they are carrying, and other attributes,” Gacek says.

Veritone assists agencies with the use of IDentify, Illuminate, and its other public safety tools through its Customer Success Team. Because the solutions are cloud-based, Veritone’s trainers and programmers don’t have to come to the location to provide assistance. Gacek has also helped law enforcement leaders and other administrators explain the technology to local government. He adds that he has never heard of any issues with councils or commissions prohibiting the use of IDentify or Illuminate.

Success Stories

AI-powered facial recognition has been used to solve heinous crimes, including murders, rapes, human trafficking, and sexual exploitation of children.

Clearview AI says its technology has been used in many cases, but details of many of them remain off the record. One case the company can publicly comment on is a Department of Homeland Security investigation into images of sexual abuse of children. The only image of one male abuser’s face was a single frame of video showing the man in the background. DHS circulated the image to law enforcement, and an investigator with an agency that uses Clearview AI ran it through the system. The results led to a suspect in Las Vegas. He pleaded guilty and is now serving 35 years.

Because Clearview AI can search public photos on the Internet, it is very effective at identifying both suspects and victims. It is especially adept at identifying victims who don’t have government ID, including people not legally in the United States and children. “Children don’t have driver licenses,” Rodriguez says.

Veritone’s IDentify has also helped investigators cut costs, reduce labor hours spent on cases, and solve crimes. A California agency used IDentify to automate its process for linking known offenders to criminal activity. IDentify optimized workflows for the department’s investigative teams, and the investigators were able to identify nine suspects in less than four months.

Veritone’s AI-powered facial recognition tools have also helped solve crimes against children. Two non-sworn employees of the Anaheim (CA) Police Department used IDentify to help a nearby agency close a case of sexual assault on a child.

Cynthia Espinoza, a senior office specialist in Anaheim PD’s homicide unit, was at home watching a TV news report about the Arcadia Police Department’s search for a man wanted in connection with the sexual assault of a 9-year-old girl. She took a photo of the man from the TV report and sent it to her work e-mail. The next day she and civilian investigator Lucy Hernandez ran the photo in IDentify. The search returned 200 possible matches among people who had been arrested and booked in Anaheim. Arcadia PD detectives used this information to build a case and arrested a suspect 24 hours after they received the leads.

Not A Surveillance Tool

Examples of cases closed with leads produced by AI-powered facial recognition are evidence of the power of this technology to enhance public safety. Despite such benefits, the technology is still the target of activists who want its use by law enforcement banned. These activists tend to slam facial recognition by intentionally implying it is real-time surveillance.

During an interview on CNN Business, Clearview AI co-founder Hoan Ton-That defended his technology as an after-the-fact investigation tool. “No one has been wrongfully arrested or even detained [because of Clearview AI],” he said.

Veritone’s facial recognition is “just a tool to help accelerate an investigation,” Gacek says. “It’s only been used when there’s been a crime committed and there was probable cause for an investigation.”